Automatic Context Compression

Agent nodes automatically compress conversation history near the model context limit, preserving the system prompt and key turns while summarizing the middle for long-running tasks.
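The compression idea can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Heym's implementation: messages are dicts with `role` and `content`, tokens are estimated at roughly four characters each, "key turns" are taken to mean the system prompt, the first user turn, and the last few turns, and `summarize` is a hypothetical callback standing in for the LLM call.

```python
from typing import Callable

Message = dict[str, str]


def estimate_tokens(messages: list[Message]) -> int:
    # Crude heuristic (assumption): roughly 4 characters per token.
    return sum(len(m["content"]) for m in messages) // 4


def compress_history(
    messages: list[Message],
    limit_tokens: int,
    summarize: Callable[[list[Message]], str],
    keep_recent: int = 4,
    threshold: float = 0.8,
) -> list[Message]:
    """Replace the middle of a long history with a summary once it nears the limit."""
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    if estimate_tokens(messages) < threshold * limit_tokens:
        return messages  # still comfortably under the context limit
    system = [m for m in messages[:1] if m["role"] == "system"]
    body = messages[len(system):]
    if len(body) <= keep_recent + 1:
        return messages  # nothing between the first turn and the recent tail
    first_turn, middle, recent = body[:1], body[1:-keep_recent], body[-keep_recent:]
    # `summarize` stands in for an LLM call; the summary's role is an assumption.
    summary = {"role": "system", "content": "Summary of earlier turns: " + summarize(middle)}
    return system + first_turn + [summary] + recent
```

The threshold of 80% of the limit and the four-turn tail are illustrative defaults; the useful property is that the original task and the latest exchanges survive verbatim while only the middle is condensed.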

Visual canvas, multi-agent orchestration, RAG pipelines, and MCP support. The first truly AI-native workflow automation platform.

Heym is a source-available, self-hosted platform for building AI automations on a drag-and-drop canvas. Describe your workflow in natural language and the assistant generates it, or wire it manually with a wide range of built-in nodes, including Telegram, IMAP, and outbound WebSocket nodes. Deploy with Docker Compose or Kubernetes, keep full control of your data, and license commercial redistribution separately when you need it.

Heym is an AI-native low-code automation platform with a visual workflow editor. It supports LLM nodes for text generation and vision, Agent nodes with tool calling and Python tools, Qdrant RAG for semantic search, MCP client and server integration, human-in-the-loop approval checkpoints, content guardrails, parallel DAG execution, a skills system for portable agent capabilities, Playwright browser automation with auto-heal, and integrations with Telegram bots, Slack, IMAP inboxes, outbound WebSocket streams, email, Redis, RabbitMQ, Grist, and any HTTP API. Self-host with Docker Compose or Kubernetes on your own infrastructure.

Integrates with

Heym integrates with OpenAI, Anthropic, Ollama, Cerebras, Google Gemini, OpenRouter, Slack, Gmail, Outlook, Telegram, Qdrant, Redis, PostgreSQL, RabbitMQ, WebSocket, Docker, MCP, Grist, Google Sheets, BigQuery, Playwright.

Not just automation tools with AI added on top. Heym is built from the ground up with AI as the execution model.

Agent nodes can pause at approval checkpoints, generate a public review link, and wait for a reviewer to accept, edit, or refuse before resuming execution.

Block unsafe or unwanted content before it reaches an LLM. Choose categories like violence, hate speech, or harassment and set sensitivity to low, medium, or high per node.
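One way to picture per-node categories and sensitivity is a threshold check on classifier scores. In this sketch the classifier itself is hypothetical (its scores are passed in), and the mapping from low/medium/high to numeric thresholds is an illustrative assumption, not Heym's configuration.

```python
from typing import Mapping

# Assumed mapping: higher sensitivity blocks at lower classifier scores.
THRESHOLDS = {"low": 0.9, "medium": 0.7, "high": 0.5}


class GuardrailViolation(Exception):
    def __init__(self, categories: list[str]):
        super().__init__(f"blocked by guardrail: {', '.join(categories)}")
        self.categories = categories


def check_guardrails(scores: Mapping[str, float], enabled: set[str], sensitivity: str) -> None:
    """Raise before the LLM call if any enabled category reaches the node's threshold.

    `scores` would come from a content classifier (hypothetical here),
    e.g. {"violence": 0.82, "hate_speech": 0.1}.
    """
    threshold = THRESHOLDS[sensitivity]
    flagged = sorted(c for c in enabled if scores.get(c, 0.0) >= threshold)
    if flagged:
        raise GuardrailViolation(flagged)
```

Raising an exception rather than returning a flag lets the block flow into the same error-handling path as any other node failure.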

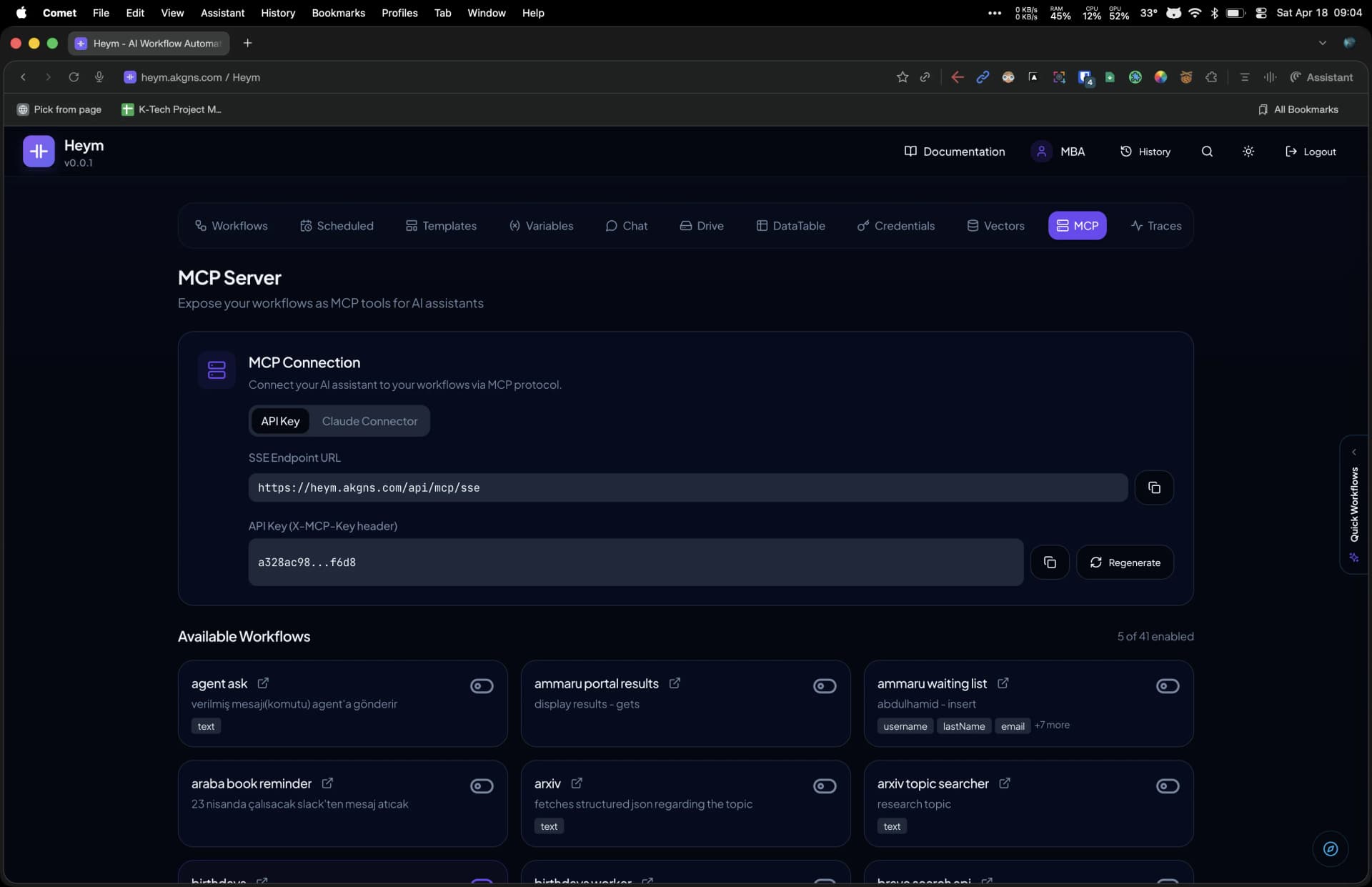

Connect agents to any MCP server to gain external tools, or expose your Heym workflows as an MCP server for Claude Desktop, Cursor, and other clients.

Turn any workflow into a public chat UI at a custom slug URL. Supports streaming responses, file uploads, image output display, and optional per-user authentication.

Portable capability bundles consisting of a SKILL.md instruction file and optional Python tools. Drag and drop a .zip or .md onto any Agent node, or use AI Build to draft and revise skills with live file previews.

When a Playwright selector breaks at runtime, the AI step automatically finds an alternative selector and retries the action, keeping browser automations resilient to UI changes.
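The auto-heal loop reduces to "try, ask for a better selector, retry". The sketch below leaves Playwright out so it runs standalone: `action` (the browser step), `suggest_selector` (the AI step) and `page_html` (a DOM snapshot) are hypothetical callbacks, and a single healing attempt is an assumed default.

```python
from typing import Callable, Optional


class SelectorNotFound(Exception):
    """Raised by the (hypothetical) browser action when a selector matches nothing."""


def act_with_auto_heal(
    action: Callable[[str], None],
    selector: str,
    suggest_selector: Callable[[str, str], Optional[str]],
    page_html: Callable[[], str],
    max_heals: int = 1,
) -> str:
    """Run `action(selector)`; if the selector is broken, ask for a replacement and retry.

    Returns the selector that finally worked.
    """
    current = selector
    for attempt in range(max_heals + 1):
        try:
            action(current)
            return current
        except SelectorNotFound:
            if attempt == max_heals:
                raise  # healing budget exhausted
            # The AI step: given the broken selector and the live DOM, propose another.
            alternative = suggest_selector(current, page_html())
            if alternative is None or alternative == current:
                raise  # no usable suggestion
            current = alternative
    raise AssertionError("unreachable")
```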

See all active cron workflows on a day, week, or month calendar. Each block shows the workflow name and cron expression on hover — click to jump straight to the canvas.

The engine builds a directed acyclic graph and runs independent nodes concurrently with a thread pool. Streaming mode emits node events as each step completes, and webhook consumers can receive them over SSE at custom, configurable endpoints.

JWT authentication with HttpOnly cookies and refresh token rotation. Credentials encrypted at rest with Fernet (AES-128-CBC with HMAC-SHA256). Rate limiting on login, register, and portal endpoints.
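The exact limits and algorithm Heym uses for login, register, and portal rate limiting are not stated here. A common approach is a per-key sliding window, sketched below with made-up numbers; the key (for example, client IP plus endpoint) is an assumption.

```python
import time
from collections import defaultdict, deque
from typing import Callable


class SlidingWindowLimiter:
    """Allow at most `max_requests` per `window_seconds` for each key (e.g. client IP)."""

    def __init__(self, max_requests: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_requests = max_requests
        self.window = window_seconds
        self.clock = clock
        self.hits: dict[str, deque[float]] = defaultdict(deque)

    def allow(self, key: str) -> bool:
        now = self.clock()
        hits = self.hits[key]
        # Drop timestamps that have slid out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # a web handler would answer HTTP 429 here
        hits.append(now)
        return True
```

Rejected attempts are not recorded, so a client that backs off regains access once its earlier hits age out. A multi-instance deployment would keep these counters in shared storage such as Redis instead of process memory.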

Connect workflows to Telegram, Slack, IMAP, outbound WebSocket, Google Sheets, BigQuery, and more with first-party nodes for data, messaging, and realtime sync.

Define per-node error handlers and retry policies to recover from transient failures automatically. Workflow-level error handlers catch unhandled exceptions and route them to a dedicated recovery flow.
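A per-node retry policy is typically "retry transient failures a few times with growing delays, then give up and let the error handler take over". The sketch assumes a max-attempts count and exponential backoff with full jitter; `TransientError` is a hypothetical marker class, and Heym's actual policy fields may differ.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, 429s, 5xx)."""


def run_with_retry(
    action: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    max_delay: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return action()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of retries: hand off to the node or workflow error handler
            # Exponential backoff capped at max_delay, with full jitter.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, delay))
    raise AssertionError("unreachable")
```

Non-transient exceptions propagate on the first failure, which keeps retries from masking genuine bugs.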

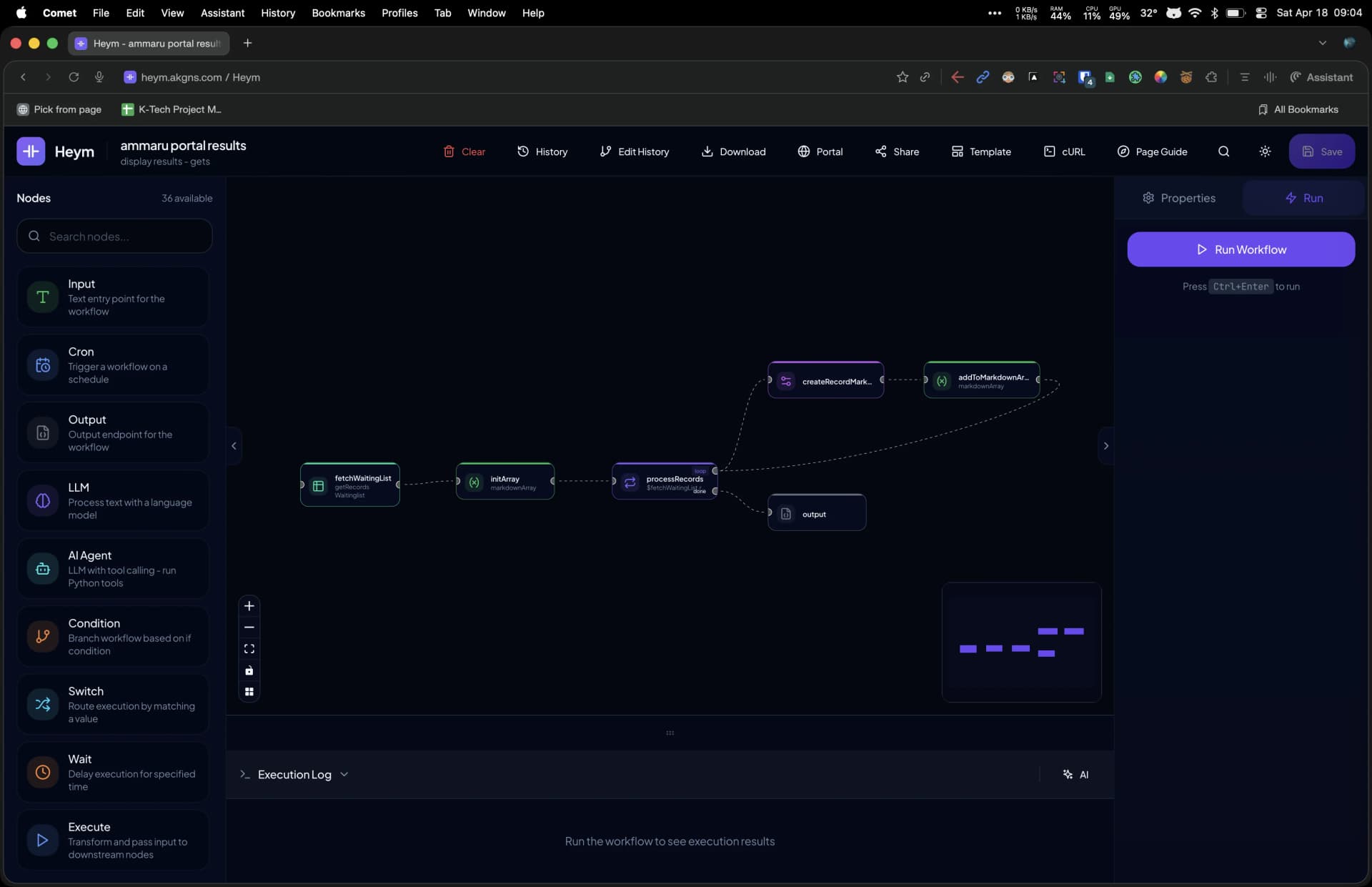

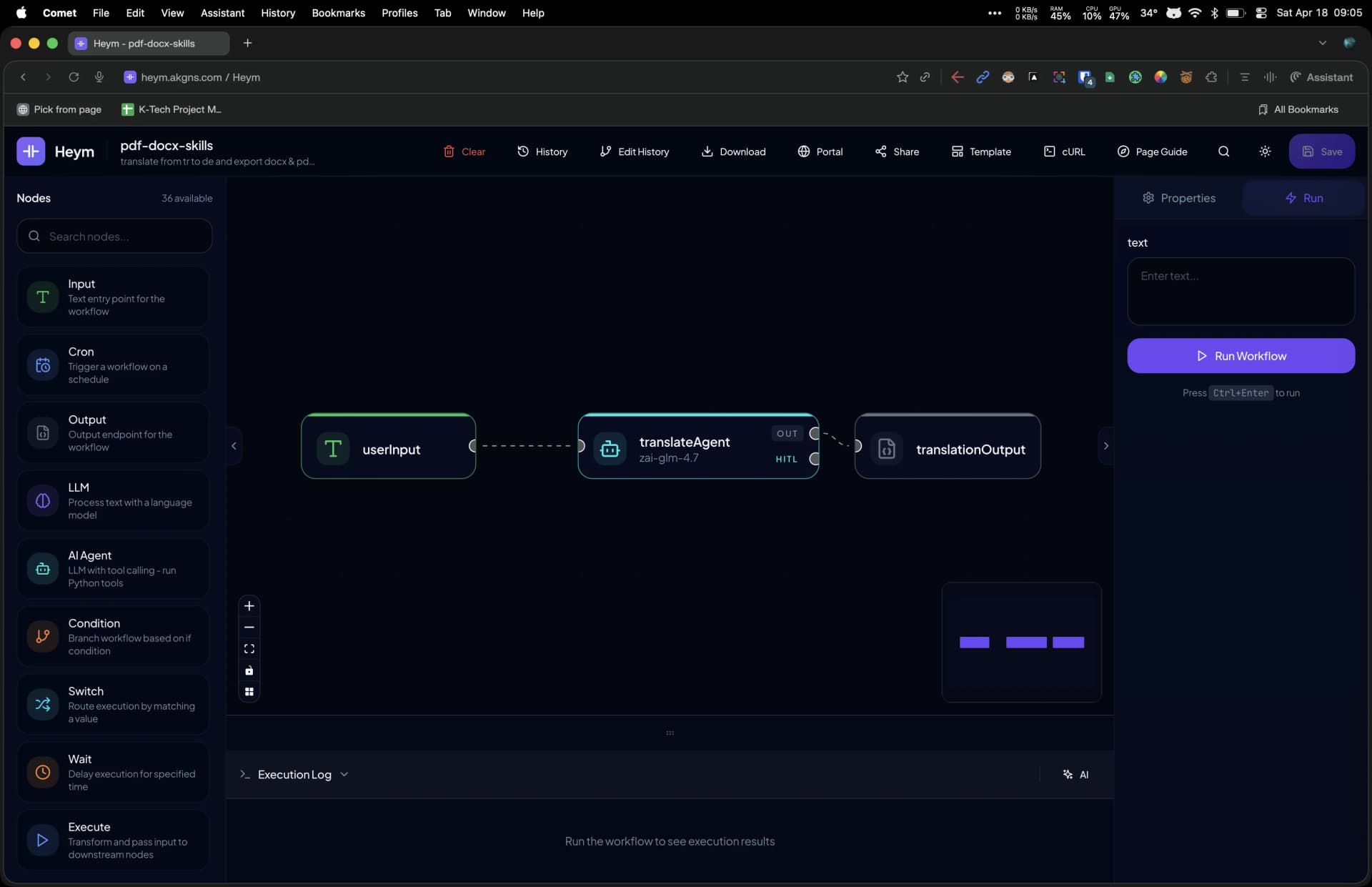

A powerful, intuitive interface designed for building complex AI workflows.

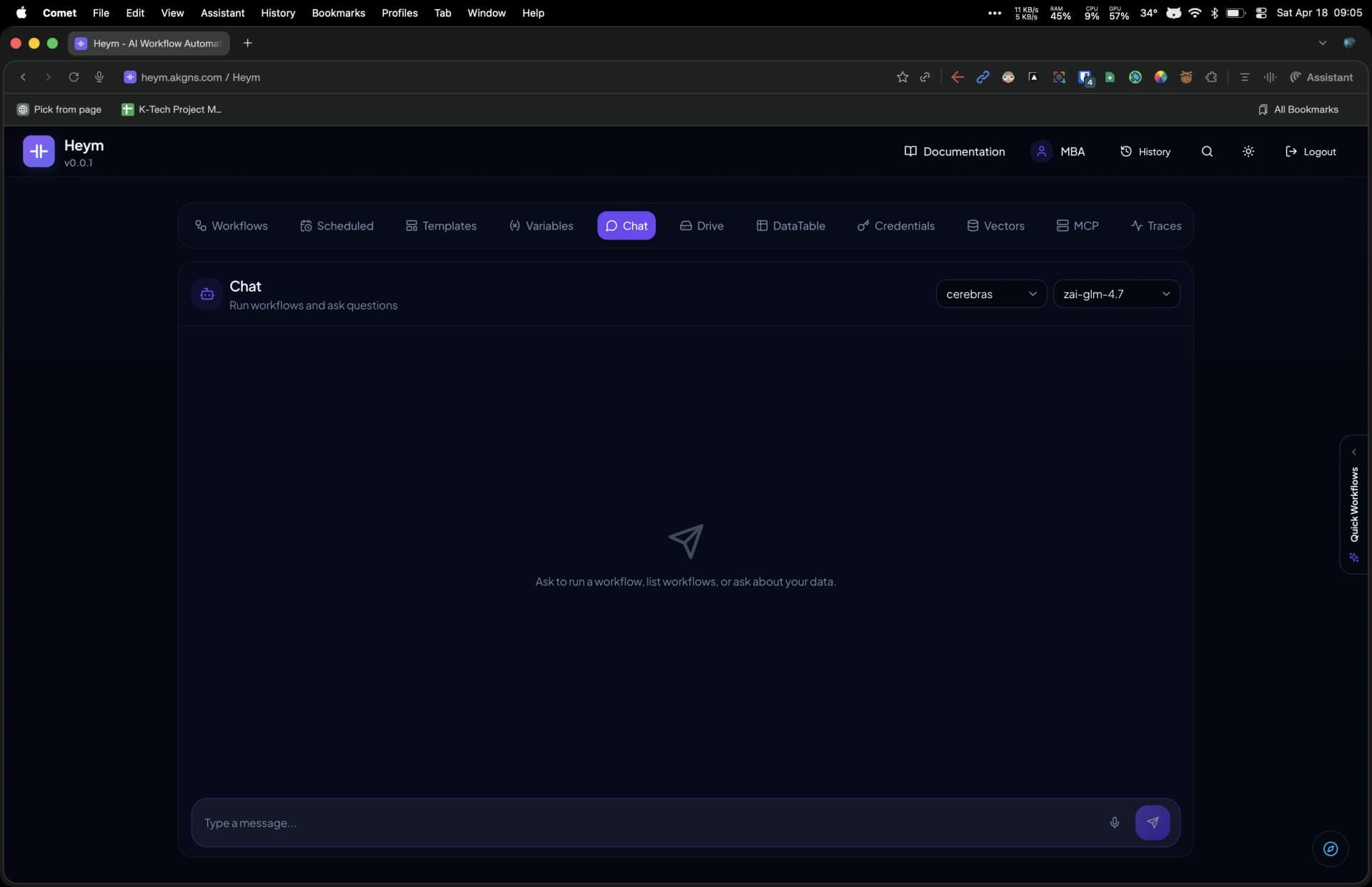

From the dashboard you manage workflows, credentials, vector stores, teams, and analytics. The visual canvas editor lets you connect many built-in node types with a drag-and-drop interface, pin node outputs for debugging, and use the AI Assistant to generate workflows from natural language.

The interface supports both light and dark themes, responsive layouts for desktop and tablet, and keyboard shortcuts for power users. Each node displays its execution status, output preview, and error state directly on the canvas. The properties panel provides inline expression editing with autocomplete for the DSL syntax, model selection dropdowns, and file upload areas for skills and RAG documents.

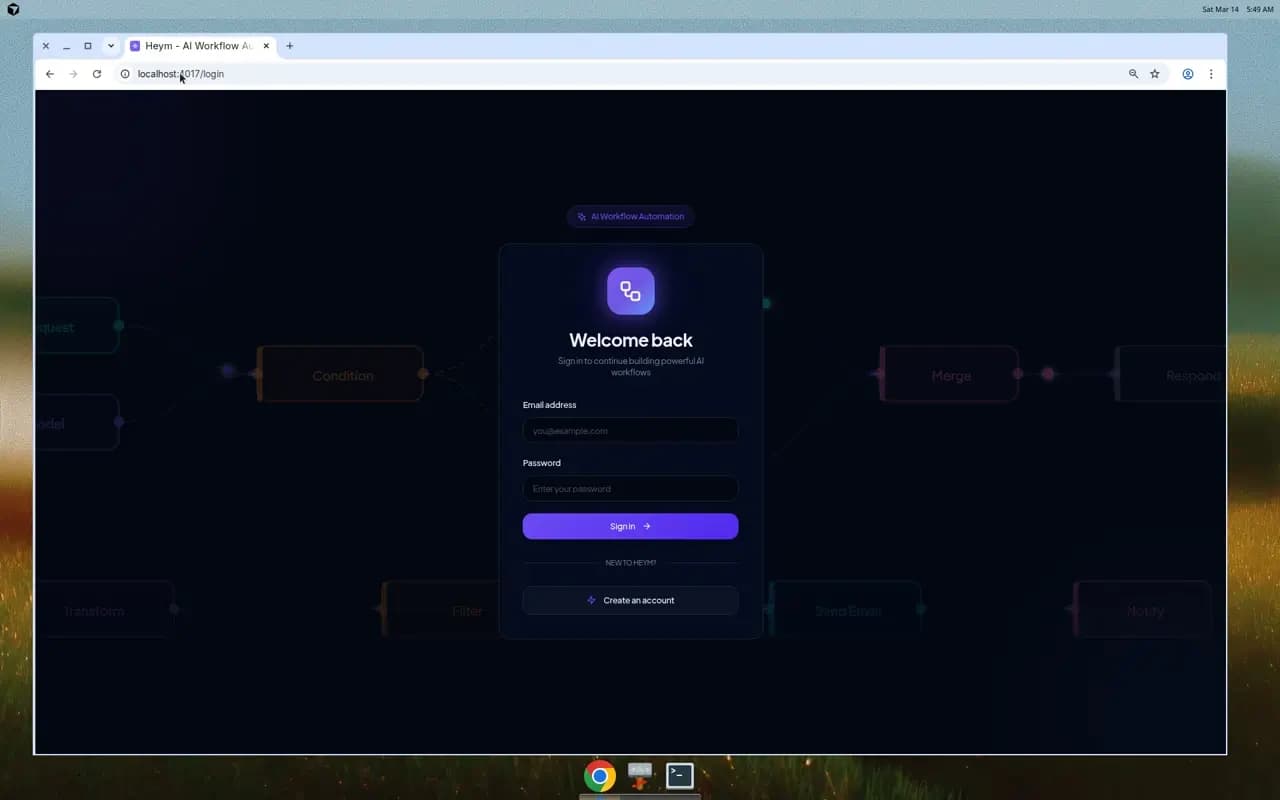

Authentication

The login screen features an animated workflow canvas in the background, previewing the drag-and-drop editor before you even sign in. JWT authentication with HttpOnly cookies ensures secure sessions. Create your account with email and password to access the full dashboard with all 15 management tabs.

What's in the box

Type or speak your automation in plain English — the Assistant generates the entire canvas: nodes, edges, credentials, expressions. Modify existing flows the same way: "add a HITL checkpoint before Output."

One orchestrator, five levels of nested sub-agents and sub-workflows. Each agent has its own model, tools, and Python execution context. Handoffs are visual — you can see the control flow, not infer it from logs.

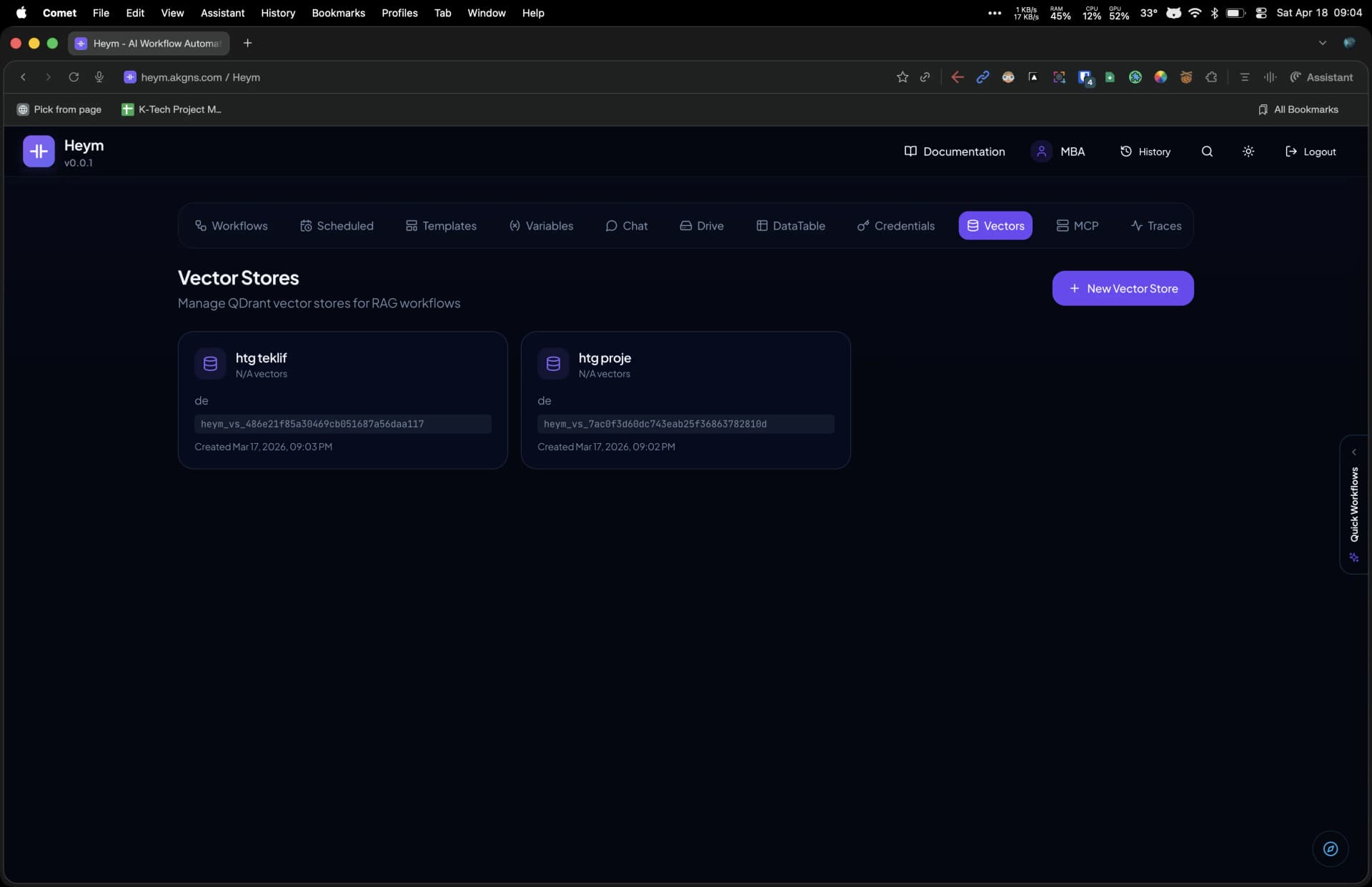

Upload PDFs, Markdown, CSVs directly. Managed Qdrant vector stores live alongside your workflows. The Qdrant RAG node wires retrieval into any flow with metadata filters and optional Cohere reranking.

Every workflow is exposable over MCP. Paste the SSE URL into Claude Desktop, Cursor, or any MCP client. Your AI can now run your automations from its own interface. Agents can also consume any external MCP server as tools.

Drop a HITL node and execution pauses: Heym generates a public review link and sends it via your configured channel. A reviewer can accept, edit, or refuse. AI output never ships without someone accountable signing off.

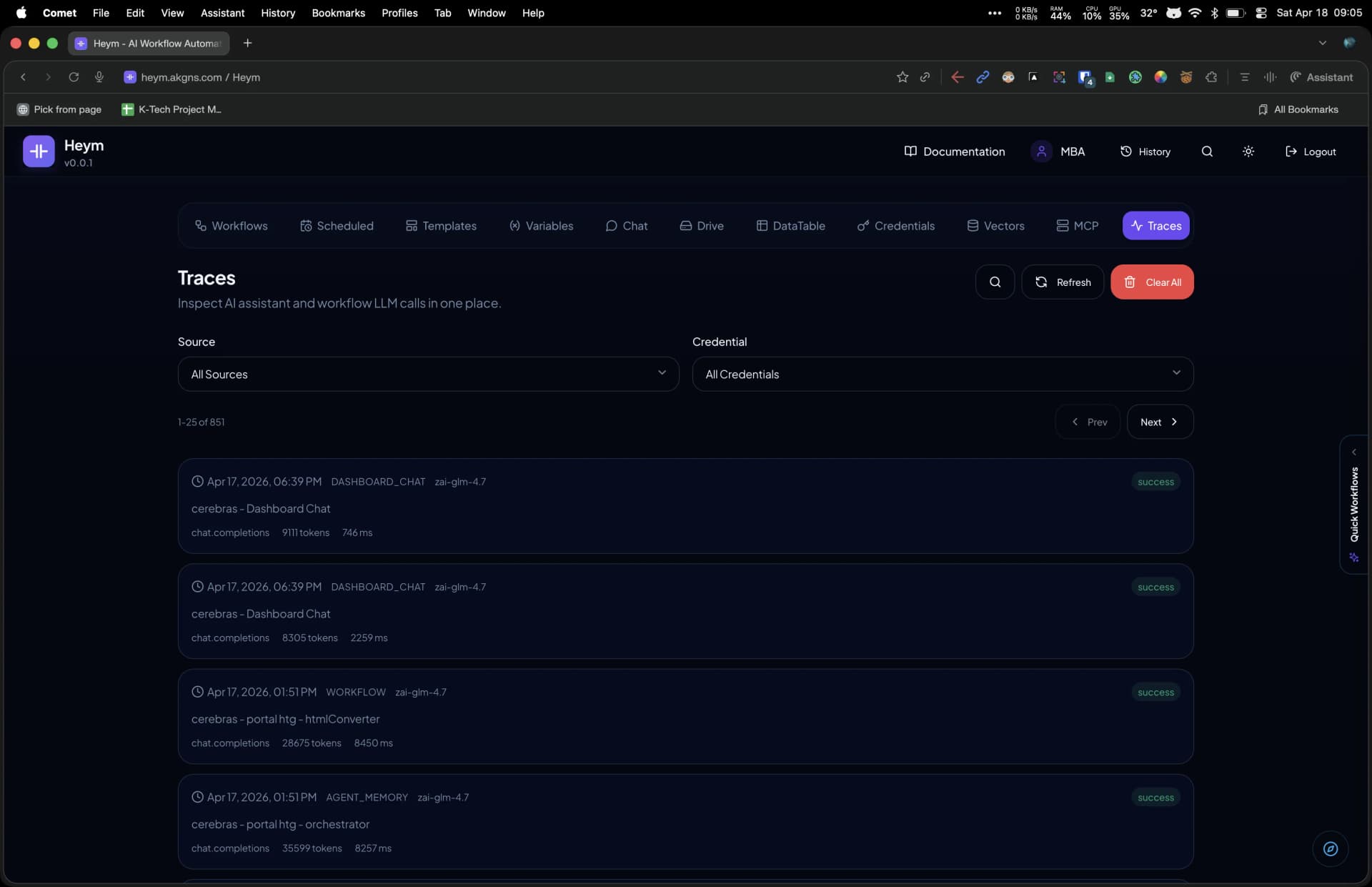

Every LLM call, every agent step, every token and millisecond — captured automatically. Filter by source, credential, model. The trace viewer shows the full prompt, response, tool calls, and timing waterfall. Replay any run against a different model.

Heym's dashboard gives you a unified control center for workflows, credentials, vector stores, teams, analytics, evaluations, and more — all accessible from a single navigation bar with 15 dedicated tabs.

Each tab is purpose-built for a specific aspect of workflow management. From organizing automations in folders to inspecting LLM traces, from managing team permissions to running evaluation suites against multiple models — the dashboard eliminates the need for external tools and keeps your entire AI automation stack in one place.

The Workflows tab supports card and list views with nested folder organization, drag-and-drop JSON import, and one-click export for backup and sharing. Credentials are encrypted at rest with Fernet (AES-128-CBC with HMAC-SHA256) and can be shared with specific users or teams. The Analytics tab tracks execution counts, success rates, and average duration with trend analysis across 7-, 30-, and 90-day windows. Traces provide full request and response payloads with timing breakdowns for every LLM and tool call in your workflows.

From customer support to DevOps, Heym adapts to your workflow needs with AI-powered automation.

Each use case below shows a real workflow pattern you can build on the Heym canvas. Combine trigger nodes for scheduling or webhooks, AI nodes for language understanding and generation, logic nodes for branching and looping, and integration nodes for connecting to external services. Every workflow runs as a parallel DAG with automatic dependency resolution, so steps that can execute concurrently do so without extra configuration.

Connect anything to anything. Build complex workflows by dragging and dropping nodes onto the canvas.

Every node type in the editor is listed below — from triggers (HTTP, Telegram, Slack, IMAP, outbound WebSocket, cron, RabbitMQ, errors) to integrations such as Qdrant, RabbitMQ, WebSocket send, Google Sheets, BigQuery, Grist, Redis, and SMTP. Wire them with expressions like $nodeLabel.field. Independent branches run in parallel automatically.

Entry node (no incoming edges) for HTTP and webhook runs: define typed input fields, read body and headers, and return workflow output to callers.

Start from Telegram bot webhook updates — read incoming text, callback queries, chat IDs, and sanitized headers for downstream expressions.

Start from Slack Events API requests — URL verification, signing-secret validation, and the full event payload for expressions.

Poll a mailbox on a per-node interval, parse each new email, and expose subject, bodies, headers, addresses, and attachment metadata.

Maintain an outbound client connection to an external WebSocket server and start runs on message, connected, or closed events.

Run on a schedule with standard cron expressions — no separate job runner required.

Publish to exchanges and queues or receive from a queue — one node with send/receive operations for event-driven flows.

Runs when another node fails (no incoming edge). Use error context for Slack alerts, logging, or recovery branches.

Process text with language models (OpenAI, Ollama, vLLM, Cohere, …): text and vision, optional JSON schema, and content guardrails.

LLM with Python tools, MCP clients, skills, sub-agents, human-in-the-loop review, and optional persistent memory.

Insert documents into or query a Qdrant vector store for retrieval-augmented generation with filters and optional reranking.

Branch on a boolean expression — separate true and false outputs.

See how Heym compares to other automation platforms. Built AI-first, not AI-added.

Comparison reflects publicly documented, native product capabilities reviewed on April 21, 2026. Availability may vary by plan, deployment model, or third-party integrations. “Partial” indicates limited, plan-restricted, or indirect implementation — hover for details.

Heym was designed AI-first from day one — multi-agent orchestration, built-in RAG, a portable skills system, and parallel DAG execution are core primitives, not add-ons. Combined with full self-hosting, LLM trace inspection, and a natural-language workflow builder, Heym gives teams complete control over their AI automation stack without vendor lock-in.

Every component is chosen for performance, developer experience, and reliability.

Your data, your infrastructure, your code. Self-host it anywhere, contribute to the project, and be part of something bigger.

We believe AI workflow automation should be transparent, auditable, and under your control. With full source access you can review the security model, extend the platform with custom nodes, and deploy in air-gapped environments where data sovereignty is non-negotiable.

Getting started locally takes three commands: clone the repository, copy the example environment file, and run the setup script. The script starts PostgreSQL, runs database migrations, and launches both the FastAPI backend and the Vue.js frontend. If you prefer a prebuilt install, you can run the published container image instead.

Licensed under Commons Clause + MIT

Commercial use, enterprise licensing, and professional support for organizations of all sizes.

While Heym is source-available and free to self-host under Commons Clause + MIT, enterprise organizations often need dedicated support, commercial licensing, custom integrations, and guaranteed SLAs. Our enterprise offering provides all of this along with priority access to new features, hands-on onboarding for your team, and direct communication channels with our engineering team for rapid issue resolution.

Enterprise licensing gives your organization commercial rights, professional support, and the flexibility to customize Heym for your specific automation needs.

Answers to your common questions about Heym.

Heym is built from the ground up around AI. Other platforms now cover parts of the same surface area, but Heym keeps multi-agent orchestration, human-in-the-loop checkpoints, node-level guardrails, built-in RAG, MCP support, and a portable skills system in one runtime designed for AI workflows first.

Yes. Heym is source-available and free to self-host under the Commons Clause + MIT licensing model. You can deploy it on your own infrastructure without licensing fees. Commercial licensing and enterprise support are available for organizations that need those additional rights and services.

Absolutely. Heym is designed for self-hosting. You can deploy it using Docker Compose, Kubernetes, or any container orchestration platform. Your data stays in your infrastructure, giving you complete control and data sovereignty.

Heym supports OpenAI, Anthropic, Google Gemini, Cerebras, OpenRouter, Ollama (for local LLMs), vLLM, Cohere, and any OpenAI-compatible API. You can also configure custom endpoints for your own models.

The AI Assistant lets you describe what you want in natural language or use voice input. It analyzes your request, generates appropriate nodes and edges, and applies them directly to the canvas, so you review and adjust the result in the same editor you build in.

MCP (Model Context Protocol) is a standardized protocol for connecting AI assistants to tools and data. Heym supports MCP in two ways: as a client (Agent nodes can connect to any MCP server and gain all its tools), and as a server (your Heym workflows can be exposed as an MCP server for Claude Desktop, Cursor, and other clients).
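Under the hood, MCP messages are JSON-RPC 2.0 requests such as `tools/list` and `tools/call`. The sketch below only builds and reads those messages; the `initialize` handshake and the SSE/HTTP transport are left out, and the tool name `summarize_ticket` with its arguments is hypothetical (an exposed Heym workflow would appear as one such tool).

```python
import itertools
import json
from typing import Any, Optional

_next_id = itertools.count(1)


def jsonrpc_request(method: str, params: Optional[dict[str, Any]] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP uses."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


def text_from_tool_result(response: str) -> str:
    """Concatenate the text content blocks of a `tools/call` response."""
    result = json.loads(response)["result"]
    return "".join(block["text"] for block in result["content"] if block.get("type") == "text")


# Discover the server's tools, then call one (tool name and arguments are hypothetical).
list_request = jsonrpc_request("tools/list")
call_request = jsonrpc_request(
    "tools/call", {"name": "summarize_ticket", "arguments": {"ticket_id": "T-123"}}
)
```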

Heym has built-in vector store management with Qdrant. You can upload PDFs, Markdown, CSV, JSON, and other document types. The RAG node performs semantic search across your documents and returns relevant context that flows directly into your LLM or Agent nodes.

Skills are portable capability bundles. Each skill consists of a SKILL.md instruction file plus optional Python tools. You can drag and drop a .zip or .md file onto an Agent node to extend its context and toolbox. Skills enable code reuse and sharing across workflows and teams.
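Loading a dropped skill can be pictured as unpacking SKILL.md plus any Python files. The bundle layout here (SKILL.md somewhere in the archive, every `.py` file treated as a tool) is an assumption for illustration, not Heym's documented format, and the `Skill` record is hypothetical.

```python
import io
import zipfile
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    instructions: str       # contents of SKILL.md
    tools: dict[str, str]   # tool file name -> Python source


def load_skill(data: bytes, filename: str) -> Skill:
    """Load a skill from a dropped .md file or .zip bundle (assumed layout)."""
    stem = filename.rsplit(".", 1)[0]
    if filename.endswith(".md"):
        return Skill(stem, data.decode("utf-8"), {})
    with zipfile.ZipFile(io.BytesIO(data)) as bundle:
        names = bundle.namelist()
        skill_md = next((n for n in names if n.rsplit("/", 1)[-1] == "SKILL.md"), None)
        if skill_md is None:
            raise ValueError("bundle has no SKILL.md")
        tools = {
            n.rsplit("/", 1)[-1]: bundle.read(n).decode("utf-8")
            for n in names
            if n.endswith(".py")
        }
        return Skill(stem, bundle.read(skill_md).decode("utf-8"), tools)
```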

We love contributions! You can contribute by reporting bugs, suggesting features, submitting pull requests, improving documentation, or sharing your workflows with the community. Join our Discord to connect with other contributors and get started.

Yes! Enterprise licensing includes professional support with SLA guarantees. Contact us at [email protected] for information about commercial use, custom development, and priority support.

The Commons Clause is a license condition that restricts selling the software or offering it as a paid service. You are free to use, modify, and distribute the software, but you may not resell it. This keeps Heym transparent and accessible while preventing commercial redistribution.

Getting started is easy. Clone the repository, copy the example environment file, and run the setup script. The script starts PostgreSQL, runs database migrations, and starts both the frontend and backend servers. Open your browser at the configured port (default: 4017) and you're ready to build.

Still have questions?

Ask on Discord

Every node, dashboard tab, and platform feature is documented with detailed guides, configuration references, and real-world examples. The documentation is built into the Heym application and available on GitHub.

From getting started tutorials that walk you through your first workflow to advanced reference material on agent orchestration, expression DSL syntax, parallel execution, and security architecture — Heym provides the documentation you need to build production-grade AI automations. The library covers dozens of node guides, 15 dashboard tabs, and over 30 reference topics.

Begin with the introduction to understand Heym's architecture, then follow the quick start guide to deploy your first instance and build a workflow in minutes.

Each node type has a dedicated documentation page covering configuration options, input and output schemas, expression examples, and common use cases. Nodes span seven categories: triggers, AI, logic, data, integrations, automation, and utilities.

Entry point for workflows triggered by HTTP requests. Supports custom input fields, request metadata, and both synchronous and asynchronous execution modes.

Schedule-based trigger using standard five-field cron expressions. Configure hourly, daily, weekly, or custom intervals for automated recurring workflow execution.
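To make the five fields concrete, here is a small matcher for minute, hour, day of month, month, and day of week. It follows classic (Vixie) cron semantics, including the rule that when both day fields are restricted a match on either suffices; whether Heym's scheduler applies exactly the same day-field rule is an assumption.

```python
from datetime import datetime

# (lowest, highest) for minute, hour, day of month, month, day of week.
# Day of week accepts 0-7 because both 0 and 7 mean Sunday.
RANGES = [(0, 59), (0, 23), (1, 31), (1, 12), (0, 7)]


def parse_field(field: str, lo: int, hi: int) -> set[int]:
    """Expand '*', '5', '1-5', '*/15', '10-30/5', or comma lists of these."""
    values: set[int] = set()
    for part in field.split(","):
        base, _, step_text = part.partition("/")
        step = int(step_text) if step_text else 1
        if base == "*":
            start, end = lo, hi
        elif "-" in base:
            first, last = base.split("-")
            start, end = int(first), int(last)
        else:
            start = end = int(base)
        values.update(range(start, end + 1, step))
    if not values or min(values) < lo or max(values) > hi:
        raise ValueError(f"field {field!r} out of range {lo}-{hi}")
    return values


def cron_matches(expr: str, when: datetime) -> bool:
    fields = expr.split()
    if len(fields) != 5:
        raise ValueError("expected: minute hour day-of-month month day-of-week")
    minute, hour, dom, month, dow = (
        parse_field(f, lo, hi) for f, (lo, hi) in zip(fields, RANGES)
    )
    dow = {d % 7 for d in dow}                 # fold 7 (Sunday) onto 0
    cron_weekday = (when.weekday() + 1) % 7    # Python: Monday=0; cron: Sunday=0
    dom_ok, dow_ok = when.day in dom, cron_weekday in dow
    # Classic rule: if both day fields are restricted, either may match.
    if fields[2].startswith("*") or fields[4].startswith("*"):
        day_ok = dom_ok and dow_ok
    else:
        day_ok = dom_ok or dow_ok
    return when.minute in minute and when.hour in hour and when.month in month and day_ok
```

For example, `0 9 * * 1-5` fires at 09:00 on weekdays and `*/15 * * * *` fires every quarter hour.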

Receive Telegram bot webhook updates and start workflows instantly. Exposes the full update payload, the primary message object, callback queries, sanitized headers, and the chat ID needed for replies.

Poll an IMAP inbox on a configurable minute interval and start workflows for newly arrived email. Exposes subject, sender, text and HTML bodies, decoded headers, and attachment metadata.

Open an outbound client connection to an external WebSocket server and trigger workflows on message, connected, or closed events. Exposes parsed message frames plus connection and close metadata.

Event-driven trigger that starts workflows when messages arrive in a RabbitMQ queue. The same node can also publish messages to an exchange or queue for asynchronous processing.

Receive Slack Events API webhooks and trigger workflows automatically. Auto-generates a static webhook URL, handles URL verification challenge, and verifies request signatures via a signing secret credential.
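Signature verification follows Slack's documented signing scheme: an HMAC-SHA256 over `v0:{timestamp}:{raw body}` keyed by the signing secret, compared against the `X-Slack-Signature` header, with stale timestamps rejected. The function below is a standalone sketch of that scheme; how Heym wires it into the trigger is not described here, and the five-minute window is Slack's recommended default.

```python
import hashlib
import hmac
import time
from typing import Optional


def verify_slack_request(
    signing_secret: str,
    timestamp: str,      # X-Slack-Request-Timestamp header
    body: bytes,         # raw request body, before any parsing
    signature: str,      # X-Slack-Signature header, e.g. "v0=ab12..."
    max_age_seconds: int = 300,
    now: Optional[float] = None,
) -> bool:
    current = time.time() if now is None else now
    if abs(current - int(timestamp)) > max_age_seconds:
        return False  # stale request: possible replay
    basestring = b"v0:" + timestamp.encode() + b":" + body
    expected = "v0=" + hmac.new(signing_secret.encode(), basestring, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature)  # constant-time comparison
```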

Automatic error recovery node that runs when any other node fails. Provides error message, failed node label, and timestamp for notifications or retry logic.

Text generation with OpenAI, Ollama, vLLM, or Cohere models. Supports text completion, vision for image analysis, image generation, structured JSON output mode, and configurable temperature.

Autonomous agent with Python tools, MCP server connections, skill attachments, sub-agent orchestration, and human-in-the-loop approval checkpoints for supervised automation.

Retrieval-augmented generation with Qdrant vector stores. Insert documents, search by semantic similarity with metadata filters, and optionally rerank results with Cohere.
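Conceptually, a filtered query keeps only points whose metadata match, then ranks them by vector similarity. The in-memory sketch below stands in for what Qdrant does server-side; the toy three-dimensional vectors replace real embeddings, exact-match filters are a simplification, and the optional Cohere reranking step is omitted.

```python
import math
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Chunk:
    text: str
    vector: list[float]
    metadata: dict[str, Any] = field(default_factory=dict)


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(chunks: list[Chunk], query_vector: list[float],
           filters: dict[str, Any], top_k: int = 3) -> list[Chunk]:
    """Filter by exact metadata match, then rank by cosine similarity."""
    candidates = [c for c in chunks if all(c.metadata.get(k) == v for k, v in filters.items())]
    ranked = sorted(candidates, key=lambda c: cosine(c.vector, query_vector), reverse=True)
    return ranked[:top_k]
```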

If/else branching based on expression evaluation. Routes execution to truthy or falsy output handles for conditional workflow logic.

Multi-path routing by matching a value against defined cases. Includes a default handle for unmatched values, enabling complex decision trees.

Iterate over arrays executing downstream nodes per item. Provides item, index, total, isFirst, and isLast context variables for each iteration.
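The per-iteration variables can be derived from the array alone, as in this sketch. A zero-based `index` is an assumption, and the generator name `loop_contexts` is hypothetical.

```python
from typing import Any, Iterator


def loop_contexts(items: list[Any]) -> Iterator[dict[str, Any]]:
    """Yield the variables downstream nodes would see for each element (zero-based index assumed)."""
    total = len(items)
    for index, item in enumerate(items):
        yield {
            "item": item,
            "index": index,
            "total": total,
            "isFirst": index == 0,
            "isLast": index == total - 1,
        }
```

A single-element array yields one context where both `isFirst` and `isLast` are true, and an empty array runs no iterations.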

Wait for multiple parallel branches to complete and combine their outputs into a single object before continuing downstream execution.

Transform and map input data using key-value expression pairs. Supports string manipulation, arithmetic, array operations, and object restructuring.

Read and write workflow-local or persistent global variables that survive across executions. Shared variables accessible via $global.variableName expressions.

CRUD operations on Heym DataTables with typed columns. Read, write, update, and delete rows in structured first-party storage without external credentials.

Call another workflow as a sub-workflow, passing input via expressions and receiving the output. Enables modular, reusable workflow compositions.

Make HTTP requests with configurable method, headers, body, and authentication. Parse JSON responses and access status codes for API integration workflows.

Connect to an external WebSocket, send one text, JSON, or binary message, and continue with send status, payload size, and negotiated subprotocol metadata.

Send Telegram bot messages with dynamic chat IDs and expression-built message bodies. Ideal for bot replies, operator alerts, and chat-first automation flows.

Send messages to Slack channels via Incoming Webhooks for real-time notifications, alerts, error reporting, and team communication from workflows.

Send emails through SMTP with dynamic recipients, subject lines, and body content using expressions for transactional and alert automation.

Key-value operations including set, get, hasKey, and deleteKey with optional TTL for caching, rate limiting, and shared state between workflows.

Spreadsheet automation with Grist. Read, write, and manage records, tables, and columns for data-driven workflows backed by collaborative spreadsheets.

Read ranges, append rows, update cells, clear ranges, and inspect sheet info in Google Sheets via OAuth2. Bring your own Google Cloud app — tokens refresh automatically.

Run SQL queries against Google BigQuery datasets and insert rows via the streaming insertAll API. Authenticated via OAuth2 with automatic token refresh — bring your own Google Cloud app.

Manage skill-generated files from the workflow: delete files or update share constraints (password, expiry TTL, max downloads) using expressions and file IDs from agent output.

Web scraping with FlareSolverr proxy support. Extract content using CSS selectors, configure wait times, and retrieve raw HTML or targeted text output.

Full browser automation with configurable steps: navigate, click, type, screenshot, scroll, and AI-powered auto-heal when selectors change. Includes network capture and cookie management.

Pause workflow execution for a specified duration in milliseconds. Useful for API rate limiting, polling intervals, and timed delay between steps.

Return the workflow response to the caller. Supports async downstream mode where subsequent nodes continue running in the background after the response is sent.

Build a JSON object from key-value mappings, the same way the Set node does. When it is the only terminal node, webhook and run responses return that object as the top-level body without label or result wrapping.

Log expression values to the backend console for debugging during development. Outputs appear in Docker container logs without affecting the workflow.

Stop execution immediately with a custom HTTP status code and error message. Used for input validation, access control, and conditional error handling.

Permanently disable another node by label at runtime. Useful for one-time operations like stopping a Cron trigger after a condition is met.

Add markdown documentation to the canvas for team communication, workflow instructions, and implementation notes without affecting execution flow.

The Heym dashboard organizes all platform management into 15 dedicated tabs accessible from a single navigation bar. Each tab has its own documentation page explaining features, permissions, and usage patterns.

Central hub for creating, organizing, and managing workflows. Supports card and list views, folder and sub-folder organization, drag-and-drop JSON import, and one-click export.

Save and reuse entire workflows or individual node configurations as templates. Share with everyone or specific users and teams for consistent automation patterns.

Manage persistent global variables that survive across workflow executions. Store counters, configuration values, and shared state accessible via $global.variableName.

Direct LLM chat interface for testing models, prototyping prompts, and asking questions without building a full workflow. Supports streaming, markdown, images, and voice input.

Securely manage API keys and secrets used by nodes. Values are encrypted at rest with Fernet (AES-128-CBC with HMAC-SHA256), masked in the UI, and shareable with individual users or teams.

Create and manage Qdrant vector stores for RAG pipelines. Upload documents in PDF, Markdown, CSV, JSON, and other formats, and share stores with team members.

Configure Model Context Protocol integration to connect agents to external tool servers. Expose workflow tools via MCP for Claude Desktop, Cursor, and other compatible clients.

Inspect LLM execution traces with full request and response payloads, timing breakdowns, tool call details, and skills included in each invocation for debugging.

Execution metrics including total runs, success rates, average duration, and trend analysis. Filter by time range or workflow, and track performance across 7-, 30-, or 90-day windows.

Create evaluation suites with test cases for systematic AI workflow testing. Run against multiple models, compare pass/fail rates, and use LLM-as-Judge scoring.

Create teams and add members by email to share workflows, credentials, variables, templates, and vector stores. All members automatically gain access to shared resources.

Create structured data tables with typed columns including string, number, boolean, date, and JSON. Inline editing, CSV import/export, and read or write sharing permissions.

Browse all files generated by skills across your workflows. Search, download, share, or delete files with metadata including source node, creation date, type, and size.

See all active cron workflows on a day, week, or month calendar. Each block shows the workflow name and cron expression — hover to preview, click to jump to the canvas.

View Docker container logs for the entire Heym stack including backend, frontend, and PostgreSQL. Filter by container and log level for infrastructure troubleshooting.

Advanced reference documentation covering the AI assistant, agent architecture, canvas features, guardrails, parallel execution engine, expression DSL, credential sharing, portal chat UI, skills system, keyboard shortcuts, and security hardening.

Natural language workflow builder inside the canvas editor. Describe what you want and the AI generates nodes and edges automatically. Supports voice input and streaming responses.

Deep dive into agent orchestration patterns including lead/sub-agent delegation, tool calling, MCP integration, skills system, and configurable execution limits.

Visual editor capabilities including drag-and-drop node placement, edge routing, zoom controls, minimap, snap-to-grid, node pinning, and output inspection panels.

Content safety filtering for LLM and Agent nodes. Blocks violence, hate speech, sexual content, prompt injection, and other unsafe categories with configurable error routing.

DAG-based scheduling that automatically runs independent nodes concurrently in a thread pool. Downstream nodes start as soon as dependencies complete.
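Dependency-driven scheduling can be sketched with Python's `concurrent.futures`: submit every node whose dependencies are done, wait for any running node to finish, and repeat. This is a minimal illustration, not Heym's engine; the shape of `nodes` and `deps` is an assumption, and features such as skipping inactive branches are left out.

```python
from concurrent.futures import FIRST_COMPLETED, Future, ThreadPoolExecutor, wait
from typing import Any, Callable

NodeFn = Callable[[dict[str, Any]], Any]


def run_dag(nodes: dict[str, NodeFn], deps: dict[str, set[str]],
            max_workers: int = 4) -> dict[str, Any]:
    """Run each node as soon as all of its dependencies have finished.

    `nodes` maps a label to a function receiving its dependencies' outputs;
    `deps` maps a label to the labels it depends on.
    """
    remaining = {name: set(deps.get(name, ())) for name in nodes}
    results: dict[str, Any] = {}
    running: dict[Future, str] = {}

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        def submit_ready() -> None:
            for name, waiting_on in list(remaining.items()):
                if not waiting_on:
                    inputs = {d: results[d] for d in deps.get(name, ())}
                    running[pool.submit(nodes[name], inputs)] = name
                    del remaining[name]

        submit_ready()
        while running:
            done, _ = wait(running, return_when=FIRST_COMPLETED)
            for fut in done:
                name = running.pop(fut)
                results[name] = fut.result()  # re-raises a node's exception
                for waiting_on in remaining.values():
                    waiting_on.discard(name)
            submit_ready()  # start everything that just became unblocked
        if remaining:
            raise ValueError(f"cycle or missing dependency: {sorted(remaining)}")
    return results
```

In a diamond `Input -> (A, B) -> Merge`, A and B run in parallel and Merge starts only after both complete, with no explicit parallelism configured.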

Approval checkpoints that pause workflow execution and generate a public review link. A human reviewer can accept, edit, or refuse the pending output before execution continues.
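A checkpoint is essentially a parked draft keyed by an unguessable token. The sketch below models the accept, edit, and refuse outcomes; the `ReviewQueue` class, the `/review/{token}` URL shape, and the in-memory storage are hypothetical, and a real deployment would persist pending checkpoints.

```python
import secrets
from dataclasses import dataclass
from typing import Any


@dataclass
class Checkpoint:
    token: str
    draft: Any
    status: str = "pending"  # pending -> accepted | edited | refused
    final: Any = None


class ReviewQueue:
    def __init__(self) -> None:
        self._pending: dict[str, Checkpoint] = {}

    def pause(self, draft: Any, base_url: str) -> str:
        """Park the draft output and return a public review link (URL shape is hypothetical)."""
        token = secrets.token_urlsafe(16)
        self._pending[token] = Checkpoint(token, draft)
        return f"{base_url}/review/{token}"

    def decide(self, token: str, decision: str, edited: Any = None) -> Checkpoint:
        """Record the reviewer's decision; the workflow resumes with `final` unless refused."""
        cp = self._pending.pop(token)  # KeyError: unknown or already-used link
        if decision == "accept":
            cp.status, cp.final = "accepted", cp.draft
        elif decision == "edit":
            cp.status, cp.final = "edited", edited
        elif decision == "refuse":
            cp.status = "refused"
        else:
            self._pending[token] = cp  # keep it pending on invalid input
            raise ValueError(f"unknown decision: {decision}")
        return cp
```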

Reference upstream data with $nodeLabel.field syntax. Supports string interpolation, ternary operators, array methods, object access, and built-in helper functions.
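The core of `$nodeLabel.field` resolution is a dotted-path lookup into upstream outputs. This sketch covers only that part; ternaries, array methods, and helper functions are out of scope, and treating `$global` as just another key in the context, plus numeric path segments for array items, are assumptions.

```python
import re
from typing import Any

# $label followed by one or more .key segments (letters, digits, underscores).
REF = re.compile(r"\$([A-Za-z_]\w*)((?:\.\w+)+)")


def lookup(context: dict[str, Any], label: str, path: str) -> Any:
    value: Any = context[label]
    for key in path.lstrip(".").split("."):
        # Numeric segments index into arrays; everything else is an object key.
        value = value[int(key)] if isinstance(value, list) else value[key]
    return value


def render(template: str, context: dict[str, Any]) -> Any:
    """Resolve `$label.field` references against upstream node outputs.

    A template that is exactly one reference keeps the value's type;
    otherwise references are interpolated into the string.
    """
    whole = REF.fullmatch(template.strip())
    if whole:
        return lookup(context, whole.group(1), whole.group(2))
    return REF.sub(lambda m: str(lookup(context, m.group(1), m.group(2))), template)
```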

Encrypted credential storage with Fernet (AES-128-CBC with HMAC-SHA256). Share API keys with individual users or entire teams while keeping secret values masked and secure.

Turn any workflow with an Input and Output node into a public chat interface. Embed on websites or share a link for end-user self-service powered by your workflows.

Skill-generated files in the Drive tab: search, download, share links with optional password and expiry. Use the Drive node in workflows to delete files or update access constraints programmatically.

Portable capability bundles consisting of SKILL.md instructions and optional Python tools. Drag and drop onto Agent nodes to extend context and toolbox.

Power user shortcuts for node selection, deletion, duplication, undo/redo, zoom, panning, and canvas navigation to speed up workflow creation.

Track all changes to workflows with timestamps and user attribution. Restore previous versions and compare differences between workflow revisions.

Configure JSON and SSE webhook endpoints, Telegram and Slack bot webhooks, cron schedules, IMAP mailbox polling, outbound WebSocket client triggers, and RabbitMQ consumers for event-driven automation.

Stream workflow execution over Server-Sent Events (SSE) with execution_started, node_start, node_complete, and execution_complete events. The editor can generate ready-to-run cURL commands and per-node start messages.
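A client consuming the stream needs to split SSE frames into events. The parser below follows the standard SSE wire format (`event:` and `data:` fields, a blank line ending each frame, `:` comment lines); that Heym sends its event names in the `event:` field with JSON in `data:` is an assumption, so adjust if the name lives inside the payload instead.

```python
import json
from typing import Any, Iterable, Iterator, Tuple


def parse_sse(lines: Iterable[str]) -> Iterator[Tuple[str, Any]]:
    """Yield (event_name, data) per SSE frame, decoding JSON data when possible."""
    event, data = "message", []  # "message" is the SSE default event name
    for raw in lines:
        line = raw.rstrip("\r\n")
        if not line:  # blank line: dispatch the frame collected so far
            if data:
                payload = "\n".join(data)
                try:
                    decoded: Any = json.loads(payload)
                except json.JSONDecodeError:
                    decoded = payload
                yield event, decoded
            event, data = "message", []
            continue
        if line.startswith(":"):
            continue  # comment / keep-alive
        name, _, value = line.partition(":")
        if value.startswith(" "):
            value = value[1:]
        if name == "event":
            event = value
        elif name == "data":
            data.append(value)
```

Fed the line iterator of a streaming HTTP response, this yields events such as `("node_complete", {...})` as each node finishes.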

Personal configuration including theme, default LLM provider, API key management, notification preferences, and display options for the canvas editor.

Security architecture overview covering JWT authentication, HttpOnly cookies, credential encryption, role-based access control, and deployment hardening recommendations.

Organize workflows with folders, sub-folders, tags, and pinned favorites. Search workflows with the command palette and filter by status or recent activity.

Connect with developers, share workflows, and shape the future of AI automation.

Heym is built in the open by a growing community of contributors and users. Whether you want to report a bug, suggest a new node type, share a workflow template, or contribute code, there are multiple ways to get involved. Join the Discord for real-time discussions, browse the GitHub repository for source code and issues, or dive into the documentation to learn every feature in depth.

Clone the repo, configure your environment, and build your first AI workflow in under five minutes with step-by-step instructions.

Detailed reference for the full node library organized by category: triggers, AI, logic, data, integrations, automation, and utilities.

Set up Telegram, Slack, IMAP, outbound WebSocket clients, Redis, RabbitMQ, Grist, email, and HTTP integrations with concise guides and examples.

Comprehensive overview of all Heym features including workflows, agents, RAG, skills system, and advanced capabilities.

Ready-made workflows for common AI automation patterns. Download, paste onto the canvas, and run in minutes.

Send an array through the OpenAI Batch API, branch on live status updates, and collect the final per-item results.

Watch a shared mailbox, summarize incoming support email, and route urgent messages to Slack.

Pull live weather (no API key) from Open-Meteo for any city coordinates — great for travel bots and dashboards.

Turn messy meeting notes into structured JSON tasks with the LLM node's JSON output mode — no image pipeline required.

Two-stage pipeline: one LLM pulls facts and bullets, the next turns them into a polished paragraph for blogs or newsletters.

Classify incoming Slack messages with an LLM and auto-route urgent tickets to a priority channel.

Guides, tutorials, and deep dives on AI workflow automation, self-hosted agents, and building with Heym.

AI agent memory: 3 types explained, architecture patterns, and no-code implementation in Heym's visual canvas.

Discover 12 real-world AI agent use cases across customer support, research, DevOps, and more — with step-by-step guidance to build your first agent in Heym.

Learn how multi-agent AI systems work, the 4 core orchestration patterns, and how to build one in Heym's visual canvas — no code required.

Get the latest news, feature releases, and AI automation tips delivered to your inbox.

Subscribe to the Heym newsletter for monthly updates on new node types, agent orchestration improvements, community workflow highlights, and upcoming features. We respect your inbox and only send content that helps you build better AI automations.

One runtime. Your infrastructure. Every feature in the open.

Docker Compose · K8s Helm · MIT + Commons Clause

Heym is a source-available AI-native workflow automation platform that lets you build, visualize, and run intelligent pipelines without writing code. Using a drag-and-drop canvas, you connect a broad library of node types across seven categories — triggers, AI, logic, data, integrations, automation, and utilities — into production-grade workflows that run on your own infrastructure.

Every workflow is a directed acyclic graph (DAG) that Heym compiles, validates, and executes in parallel where dependencies allow, giving you maximum throughput without manual parallelism boilerplate.

The AI Assistant accepts natural language or voice input and generates an entire workflow — nodes, edges, and configuration — applying it to the canvas instantly. You can describe a support ticket triage pipeline, a document intelligence workflow, or a multi-agent research system in plain English and have a working prototype in seconds.

The assistant streams its response and automatically parses and applies any valid workflow JSON. Voice input is processed in the browser, transcribed locally, and sent to the backend alongside your existing canvas state so the assistant can modify, extend, or refactor any part of the current workflow.
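The "parse and apply any valid workflow JSON" step can be sketched as scanning the accumulated assistant text for the first JSON object that decodes cleanly and looks like a workflow. The `nodes`/`edges` shape check below is a hypothetical stand-in for Heym's real schema validation.

```python
import json
from typing import Optional


def extract_workflow(text: str) -> Optional[dict]:
    """Return the first JSON object in `text` that looks like a workflow, else None."""
    decoder = json.JSONDecoder()
    idx = text.find("{")
    while idx != -1:
        try:
            # raw_decode parses one JSON value from the start of the string and ignores the rest.
            obj, _end = decoder.raw_decode(text[idx:])
        except json.JSONDecodeError:
            obj = None
        # Hypothetical validity check: a workflow must carry "nodes" and "edges" lists.
        if (
            isinstance(obj, dict)
            and isinstance(obj.get("nodes"), list)
            and isinstance(obj.get("edges"), list)
        ):
            return obj
        idx = text.find("{", idx + 1)
    return None
```

Because the check runs on the text received so far, a streaming client can call it after each chunk and apply the workflow as soon as a complete object has arrived.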

Heym supports multi-agent orchestration with up to five levels of nesting. An orchestrator agent can delegate tasks to named sub-agents and sub-workflows, passing context and receiving results.
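One way to picture the five-level nesting limit is a recursive delegation in which each level passes the task down and collects results, refusing to go deeper than the cap. This is a minimal sketch under two assumptions: agents without sub-agents simply "handle" the task, and the orchestrator counts as level 1. The `Agent` class is hypothetical.

```python
from dataclasses import dataclass, field

MAX_DEPTH = 5  # the documented nesting limit; counting the orchestrator as level 1 is an assumption


@dataclass
class Agent:
    name: str
    # Hypothetical: an agent either answers directly or delegates to its sub-agents.
    sub_agents: list["Agent"] = field(default_factory=list)

    def run(self, task: str, depth: int = 1) -> str:
        if depth > MAX_DEPTH:
            raise RecursionError(f"{self.name}: nesting deeper than {MAX_DEPTH} levels")
        if not self.sub_agents:
            return f"{self.name} handled '{task}'"
        # Pass the task context down one level and collect each sub-agent's result.
        results = [sub.run(task, depth + 1) for sub in self.sub_agents]
        return f"{self.name} combined [{'; '.join(results)}]"


orchestrator = Agent("orchestrator", [Agent("researcher"), Agent("writer")])
summary = orchestrator.run("report")
```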

Each agent supports Python tool calling, MCP server connections, portable skills bundles, human-in-the-loop approval checkpoints that generate a public review link, and automatic context compression for long-running tasks. Content guardrails sit between the LLM output and downstream nodes, rejecting non-compliant responses before they propagate through the rest of the workflow.

Built-in RAG pipelines connect to managed Qdrant vector stores. Upload documents, configure chunking and embedding, and enable semantic search with metadata filters and optional Cohere reranking — all from the canvas without custom code.
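Chunking is the step that decides what each vector in Qdrant represents. A common approach, sketched here as an assumption rather than Heym's exact chunker, is fixed-size character windows that overlap so sentences cut at a boundary still appear whole in one chunk. Each chunk would then be embedded and upserted with its metadata.

```python
def chunk_text(text: str, chunk_size: int = 500, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap by `overlap` characters."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks
```

Larger overlaps improve recall at chunk boundaries but store more redundant vectors; token-based or sentence-aware splitting are common alternatives.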

Execution traces record the full request and response payload, token usage, tool calls, and timing for every LLM invocation, making production debugging straightforward. The trace viewer shows a waterfall timeline of every node execution, color-coded by status. You can replay any historical execution against a different model or configuration directly from the trace view.

Heym compares favorably to n8n, Zapier, and Make.com as an AI-first alternative. Unlike general automation tools that added AI as a plugin, Heym was designed from the ground up for LLM orchestration, multi-agent coordination, and RAG pipelines.

Heym is self-hostable with Docker Compose or Kubernetes, keeping all data within your infrastructure perimeter, a requirement for teams processing PII, financial records, or proprietary code. The source code is available on GitHub under the MIT license with the Commons Clause. Enterprise customers receive a commercial license with SLA-backed support and a dedicated Slack channel.

Trigger nodes include Webhook, Telegram, Cron, IMAP, outbound WebSocket Trigger, RabbitMQ, and Error Handler. Webhook triggers support path prefixes, authentication tokens, and response templates so callers receive a structured reply while the workflow continues asynchronously.
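The webhook behavior described above (path prefix, token check, templated immediate reply) can be sketched as a small handler. The token, prefix, status codes, and template below are hypothetical examples, not Heym's configuration fields; the token comparison uses `hmac.compare_digest` so response timing does not leak how much of the token matched.

```python
import hmac
from string import Template

# Hypothetical webhook settings for illustration only.
EXPECTED_TOKEN = "s3cret-token"
RESPONSE_TEMPLATE = Template('{"status": "accepted", "run_id": "$run_id"}')


def handle_webhook(path: str, token: str, run_id: str, prefix: str = "/hooks/") -> tuple[int, str]:
    """Validate the path prefix and token, then render the immediate reply."""
    if not path.startswith(prefix):
        return 404, '{"error": "unknown path"}'
    # Constant-time comparison avoids leaking the token through timing differences.
    if not hmac.compare_digest(token.encode(), EXPECTED_TOKEN.encode()):
        return 401, '{"error": "invalid token"}'
    # The caller gets this reply right away; the workflow run would be queued separately.
    return 202, RESPONSE_TEMPLATE.substitute(run_id=run_id)
```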

Cron triggers support both simple intervals and full POSIX cron expressions with timezone support. IMAP triggers poll a mailbox on a configurable minute interval. WebSocket Trigger nodes connect Heym to an external socket server and can fire on message, connected, or closed events. The node configuration panel keeps all trigger settings editable on-canvas.
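To show what evaluating a five-field cron expression involves, here is a minimal matcher sketch. It supports `*`, lists, ranges, and the widely used `*/n` step syntax (a Vixie cron extension rather than strict POSIX), applies the POSIX rule that a restricted day-of-month and a restricted day-of-week are combined with OR, and converts an aware datetime to a target timezone when one is given. Day-of-week `7` and named months or days are not handled.

```python
from datetime import datetime, tzinfo
from typing import Optional


def _field_matches(expr: str, value: int, lo: int, hi: int) -> bool:
    """Match one cron field such as '*', '*/15', '1-5', or '0,30'."""
    for part in expr.split(","):
        step = 1
        if "/" in part:
            part, step_str = part.split("/")
            step = int(step_str)
        if part == "*":
            start, end = lo, hi
        elif "-" in part:
            start, end = (int(x) for x in part.split("-"))
        else:
            start = end = int(part)
        if start <= value <= end and (value - start) % step == 0:
            return True
    return False


def cron_matches(expr: str, dt: datetime, tz: Optional[tzinfo] = None) -> bool:
    """True if `dt` satisfies 'minute hour day-of-month month day-of-week'."""
    if tz is not None:
        dt = dt.astimezone(tz)  # pass an aware datetime; naive values are treated as local time
    minute, hour, dom, month, dow = expr.split()
    cron_dow = (dt.weekday() + 1) % 7  # cron counts Sunday as 0; Python counts Monday as 0
    dom_ok = _field_matches(dom, dt.day, 1, 31)
    dow_ok = _field_matches(dow, cron_dow, 0, 6)
    # POSIX rule: when both day fields are restricted, matching either one is enough.
    day_ok = (dom_ok or dow_ok) if (dom != "*" and dow != "*") else (dom_ok and dow_ok)
    return (
        _field_matches(minute, dt.minute, 0, 59)
        and _field_matches(hour, dt.hour, 0, 23)
        and _field_matches(month, dt.month, 1, 12)
        and day_ok
    )
```

A scheduler would call this once per minute, passing `zoneinfo.ZoneInfo("Europe/Berlin")` or similar as `tz`, and fire the trigger whenever it returns `True`.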

The AI node category provides LLM, AI Agent, and Qdrant RAG nodes. The LLM node supports OpenAI GPT-4o, GPT-4, GPT-3.5 Turbo, Ollama local models, vLLM, Cohere Command, and any OpenAI-compatible API endpoint. You configure system prompt, temperature, max tokens, and response format per node. For large prompt lists, optional batch mode uses OpenAI’s Batch API for efficient bulk runs with a dedicated status branch for progress and side effects.
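Batch mode relies on OpenAI's Batch API, which takes a JSONL file where each line is one request carrying a `custom_id`, `method`, `url`, and request `body`. The sketch below builds such a file for chat completions from a list of prompts; the model name and default parameters are illustrative assumptions. The file would then be uploaded with purpose `batch` and referenced when creating the batch, and results are matched back to inputs by `custom_id`.

```python
import json


def build_batch_jsonl(prompts: list[str], model: str = "gpt-4o",
                      system_prompt: str = "You are a helpful assistant.",
                      temperature: float = 0.2, max_tokens: int = 256) -> str:
    """Serialize one chat-completions request per prompt in Batch API JSONL format."""
    lines = []
    for i, prompt in enumerate(prompts):
        request = {
            "custom_id": f"prompt-{i}",  # used to match each result back to its input
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "temperature": temperature,
                "max_tokens": max_tokens,
                "messages": [
                    {"role": "system", "content": system_prompt},
                    {"role": "user", "content": prompt},
                ],
            },
        }
        lines.append(json.dumps(request))
    return "\n".join(lines) + "\n"
```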

The AI Agent node wraps an LLM with a tool-calling loop, connecting to Python tools, HTTP tools, or any MCP server. Agent nodes support streaming output, context compression, and per-invocation guardrails. The Qdrant RAG node performs dense, sparse BM25, or hybrid search, returning ranked chunks with metadata and scores.
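The tool-calling loop at the heart of an Agent node can be sketched in a few lines: call the model, run any tool it requests, append the result to the conversation, and repeat until the model answers without a tool call. The message shape, the `TOOLS` registry, and the scripted `fake_model` are simplified hypotheticals, not OpenAI's exact wire format or Heym's internals.

```python
import json
from typing import Callable

# Hypothetical tool registry; real agents would register Python, HTTP, or MCP tools.
TOOLS: dict[str, Callable[..., str]] = {
    "add": lambda a, b: str(a + b),
}


def run_agent(model: Callable[[list[dict]], dict], user_msg: str, max_steps: int = 5) -> str:
    """Call the model, execute any requested tool, feed the result back, repeat."""
    messages = [{"role": "user", "content": user_msg}]
    for _ in range(max_steps):
        reply = model(messages)
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]  # no tool requested: this is the final answer
        result = TOOLS[call["name"]](**json.loads(call["arguments"]))
        messages.append({"role": "tool", "name": call["name"], "content": result})
    raise RuntimeError("agent did not finish within max_steps")


def fake_model(messages: list[dict]) -> dict:
    """Scripted stand-in for an LLM: request the tool once, then answer."""
    if messages[-1]["role"] == "user":
        return {"role": "assistant", "tool_call": {"name": "add", "arguments": '{"a": 2, "b": 3}'}}
    return {"role": "assistant", "content": f"The sum is {messages[-1]['content']}."}
```

The `max_steps` cap is a common safeguard so a model that keeps requesting tools cannot loop forever.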

Logic nodes include Condition, Switch, Loop, and Merge. The Condition node evaluates a boolean expression and routes execution to one of two branches. Switch routes to one of up to sixteen named branches based on a value match or regex pattern.
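Switch routing can be sketched as checking named rules in order and returning the first branch that matches, with an optional fallback. Whether Heym uses search or full-match semantics for regex rules is not stated; this sketch assumes `re.search`, and the function name and default branch are hypothetical.

```python
import re
from typing import Optional

MAX_BRANCHES = 16  # documented upper bound on named Switch branches


def route_switch(value: str, branches: dict[str, str], use_regex: bool = False,
                 default: Optional[str] = None) -> Optional[str]:
    """Return the name of the first branch whose rule matches `value`."""
    if len(branches) > MAX_BRANCHES:
        raise ValueError(f"a Switch supports at most {MAX_BRANCHES} branches")
    for name, rule in branches.items():  # dicts keep insertion order, so order sets priority
        matched = re.search(rule, value) is not None if use_regex else value == rule
        if matched:
            return name
    return default
```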

The Loop node iterates over an array, executing its child sub-graph once per item and collecting results. The Merge node waits for multiple upstream branches and combines their outputs using concatenation, deep merge, or a custom merge function written in the expression DSL.
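The difference between the concatenation and deep-merge strategies is easiest to see in code. This minimal sketch assumes branch outputs are lists or scalars for concatenation and dictionaries for deep merge, with later branches winning on conflicting keys; the custom expression-DSL merge is not modeled.

```python
from typing import Any


def deep_merge(base: dict, override: dict) -> dict:
    """Recursively merge `override` into a copy of `base`; `override` wins on conflicts."""
    merged = dict(base)
    for key, value in override.items():
        if isinstance(merged.get(key), dict) and isinstance(value, dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = value
    return merged


def merge_branches(outputs: list[Any], mode: str = "concat") -> Any:
    """Combine upstream branch outputs the way a Merge node might."""
    if mode == "concat":
        combined: list = []
        for out in outputs:
            combined.extend(out if isinstance(out, list) else [out])
        return combined
    if mode == "deep":
        result: dict = {}
        for out in outputs:
            result = deep_merge(result, out)
        return result
    raise ValueError(f"unknown merge mode: {mode}")
```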

Data nodes include Set, Variable, DataTable, and Execute. The Set node assigns named variables from the expression DSL, prior node outputs, or hardcoded literals. The Variable node provides a workflow-scoped mutable store that persists across loop iterations and sub-agent calls.

The DataTable node provides a structured relational table within the workflow, supporting append, query, update, and delete without an external database. The Execute node runs shell commands or Python scripts in an isolated subprocess, capturing stdout, stderr, and exit code as outputs.
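Capturing stdout, stderr, and the exit code from a separate process is what the standard library's `subprocess.run` provides. This sketch runs a Python snippet in a fresh interpreter started with `-I` (isolated mode, which ignores `PYTHON*` environment variables and the user site-packages directory) and a timeout. Heym's actual isolation may go further; a plain subprocess is not a security sandbox.

```python
import subprocess
import sys


def execute_python(code: str, timeout: float = 10.0) -> dict:
    """Run a Python snippet in a separate interpreter and capture its outputs."""
    proc = subprocess.run(
        [sys.executable, "-I", "-c", code],  # -I: isolated mode for the child interpreter
        capture_output=True,
        text=True,
        timeout=timeout,  # raises subprocess.TimeoutExpired if the script hangs
    )
    return {"stdout": proc.stdout, "stderr": proc.stderr, "exit_code": proc.returncode}
```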

Integration nodes include HTTP, WebSocket Send, Telegram, Slack, Send Email, Redis, Grist, Google Sheets, and BigQuery. The HTTP node supports GET, POST, PUT, PATCH, DELETE, and HEAD with configurable headers, authentication (Bearer, Basic, API Key), and response parsing (JSON, text, binary).
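The three authentication modes map onto standard request headers: `Authorization: Bearer <token>`, `Authorization: Basic <base64(user:password)>`, and a custom API-key header. This sketch builds a standard-library `urllib.request.Request` and a response parser for the three formats; the `auth` dictionary shape and the default `X-API-Key` header name are hypothetical.

```python
import base64
import json
from typing import Any, Optional
from urllib.request import Request


def build_request(url: str, method: str = "GET", auth: Optional[dict] = None,
                  body: Optional[bytes] = None) -> Request:
    """Build an HTTP request with Bearer, Basic, or API-key authentication."""
    headers = {"Accept": "application/json"}
    if body is not None:
        headers["Content-Type"] = "application/json"
    if auth:
        kind = auth["type"]
        if kind == "bearer":
            headers["Authorization"] = f"Bearer {auth['token']}"
        elif kind == "basic":
            raw = f"{auth['username']}:{auth['password']}".encode()
            headers["Authorization"] = "Basic " + base64.b64encode(raw).decode("ascii")
        elif kind == "api_key":
            headers[auth.get("header", "X-API-Key")] = auth["key"]
        else:
            raise ValueError(f"unsupported auth type: {kind}")
    return Request(url, data=body, headers=headers, method=method)


def parse_body(raw: bytes, mode: str = "json") -> Any:
    """Parse a response body as JSON, text, or raw binary."""
    if mode == "json":
        return json.loads(raw)
    if mode == "text":
        return raw.decode("utf-8")
    return raw  # binary: hand the bytes through unchanged
```

Sending is then a matter of `urllib.request.urlopen(request)` and passing the body to `parse_body`.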

The WebSocket Send node opens an outbound client connection to an external socket, sends one text, JSON, or binary message, and returns delivery metadata. The Slack node sends messages, updates existing messages, and uploads files via a bot token. Send Email connects to an SMTP server with TLS support and renders HTML or plain-text templates. Redis supports GET, SET, DEL, LPUSH, RPOP, PUBLISH, and arbitrary command passthrough.
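Sending an email with both HTML and plain-text bodies over TLS can be done with the standard library alone. In this sketch, `EmailMessage.add_alternative` turns the message into `multipart/alternative` so clients pick the richest part they support, and `send_email` upgrades the connection with STARTTLS before logging in. Host, port, and credential handling are illustrative assumptions.

```python
import smtplib
import ssl
from email.message import EmailMessage
from typing import Optional


def build_email(sender: str, recipient: str, subject: str,
                text_body: str, html_body: Optional[str] = None) -> EmailMessage:
    """Create a plain-text email, adding an HTML alternative when provided."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = subject
    msg.set_content(text_body)  # plain-text part for clients without HTML support
    if html_body is not None:
        msg.add_alternative(html_body, subtype="html")  # message becomes multipart/alternative
    return msg


def send_email(msg: EmailMessage, host: str, port: int = 587,
               username: Optional[str] = None, password: Optional[str] = None) -> None:
    """Deliver `msg` over SMTP, upgrading the connection with STARTTLS first."""
    with smtplib.SMTP(host, port) as smtp:
        smtp.starttls(context=ssl.create_default_context())
        if username is not None:
            smtp.login(username, password)
        smtp.send_message(msg)
```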

Google Sheets reads ranges, appends and updates rows, and inspects sheet metadata via OAuth2. The BigQuery node runs SQL queries and inserts rows via the streaming insertAll API with automatic OAuth2 token refresh. Grist reads and writes rows in Grist data documents using the Grist API.

Automation nodes include Crawler and Playwright. The Crawler node fetches URLs using a configurable HTTP client, extracts content with CSS selectors or XPath, and returns structured data arrays.

The Playwright node launches a Chromium browser, navigates to a URL, and executes interaction steps such as click, fill, select, and screenshot. It includes an AI auto-heal feature that regenerates broken selectors using a vision model when a step fails due to a DOM change.

Utility nodes include Output, Wait, Console Log, Throw Error, Disable Node, and Sticky Note. The Output node marks the terminal result of a workflow execution. The Wait node pauses execution for a configurable duration or until a webhook callback arrives, enabling human-in-the-loop patterns.

Console Log emits messages to the execution trace for debugging. Throw Error terminates the current branch with a typed error that Error Handler nodes can catch. Disable Node temporarily removes a node from execution without deleting it. Sticky Note attaches a text annotation to the canvas for documentation.

MCP integration works in both directions. As an MCP client, Agent nodes connect to any external MCP server and gain access to all tools it exposes. Heym automatically discovers available tools on connection and presents them in the agent configuration panel.
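Under the hood, MCP runs on JSON-RPC 2.0: a client discovers tools with a `tools/list` request, whose result carries each tool's `name`, `description`, and `inputSchema`, and invokes one with `tools/call`, passing `name` and `arguments`. This sketch only builds and parses those messages; the transport (stdio or HTTP) and the `initialize` handshake that precedes them are omitted, and the helper names are hypothetical.

```python
import itertools
import json
from typing import Optional

_request_ids = itertools.count(1)  # JSON-RPC ids must be unique per connection


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request."""
    msg = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)


def tools_from_list_result(response: str) -> dict[str, dict]:
    """Index the tools in a tools/list response by name, keeping their input schemas."""
    result = json.loads(response)["result"]
    return {tool["name"]: tool.get("inputSchema", {}) for tool in result["tools"]}


def call_tool_request(name: str, arguments: dict) -> str:
    """Build a tools/call request for one discovered tool."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})
```

The indexed schemas are what an agent configuration panel could display, and what the model sees when deciding which tool to call.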

As an MCP server, Heym exposes all published workflows as callable tools over MCP. Claude Desktop, Cursor, VS Code extensions, and other MCP-compatible clients can invoke your workflows directly from their native interfaces.

The skills system enables portable, reusable capability bundles for Agent nodes. A skill is a zip archive or Markdown file containing a SKILL.md instruction document and optional Python tool files. Dragging a skill onto an Agent node extends that agent's system context and toolbox without modifying the node configuration.
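Reading a skill archive amounts to locating `SKILL.md` and any Python tool files inside the zip. This minimal sketch uses the standard library's `zipfile`; the returned dictionary shape and the `apply_skill` helper, which appends the instructions to an agent's system prompt, are hypothetical illustrations of how a skill could extend an agent's context.

```python
import io
import zipfile


def load_skill(data: bytes) -> dict:
    """Read SKILL.md instructions and any .py tool files from a skill zip archive."""
    with zipfile.ZipFile(io.BytesIO(data)) as zf:
        names = zf.namelist()
        skill_md = next((n for n in names if n.rsplit("/", 1)[-1] == "SKILL.md"), None)
        if skill_md is None:
            raise ValueError("skill archive has no SKILL.md")
        instructions = zf.read(skill_md).decode("utf-8")
        tools = {n: zf.read(n).decode("utf-8") for n in names if n.endswith(".py")}
    return {"instructions": instructions, "tools": tools}


def apply_skill(system_prompt: str, skill: dict) -> str:
    """Hypothetical: extend an agent's system context with the skill's instructions."""
    return f"{system_prompt}\n\n{skill['instructions']}"
```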

Skills can define custom tool schemas, persistent memory structures, and multi-step reasoning templates. Agent nodes include an AI Skill Builder modal for drafting and revising skills with live previews. The same skill can be shared across multiple agents and workflows for consistent behavior across an organization.

The Portal Chat UI turns any workflow into a standalone chat interface that can be embedded in a web application or shared as a public URL. Portal workflows receive user messages, process them through AI and logic nodes, and stream responses back in real time.

Each portal instance is isolated with its own session state, making it suitable for customer-facing applications, internal support tools, and interactive demos. Portal sessions persist conversation history and support multi-turn interactions with context carried across turns.

The Evaluation Suite lets you measure workflow quality systematically. Define a dataset of input-output pairs, select LLM judge models, and run the suite against any workflow version. Heym records pass rates, latency distributions, token costs, and judge scores per item in a dashboard.
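The per-run metrics mentioned above can be aggregated with the standard `statistics` module. This sketch assumes a hypothetical per-item record with a pass flag, latency, cost, and a 0 to 1 judge score; it uses the `inclusive` quantile method so the reported p95 stays within the observed latencies.

```python
import statistics
from dataclasses import dataclass


@dataclass
class EvalResult:
    passed: bool
    latency_ms: float
    cost_usd: float
    judge_score: float  # hypothetical 0-1 score from an LLM judge


def summarize(results: list[EvalResult]) -> dict:
    """Aggregate pass rate, latency percentiles, cost, and judge scores for one run."""
    latencies = [r.latency_ms for r in results]
    return {
        "pass_rate": sum(r.passed for r in results) / len(results),
        "p50_latency_ms": statistics.median(latencies),
        # The last of 19 cut points is the 95th percentile; older Pythons need >= 2 items.
        "p95_latency_ms": statistics.quantiles(latencies, n=20, method="inclusive")[-1],
        "total_cost_usd": round(sum(r.cost_usd for r in results), 6),
        "mean_judge_score": statistics.fmean(r.judge_score for r in results),
    }
```

Storing these summaries per workflow version is what makes side-by-side comparison across prompt or model changes possible.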

Evaluation results are versioned so you can compare performance across workflow iterations, prompt changes, or model upgrades before promoting a new version to production.

Deployment options include Docker Compose for single-server installations and Kubernetes Helm charts for cloud-native deployments. The Docker Compose setup starts PostgreSQL, the FastAPI backend, and the Vue.js frontend with a single command.

The backend exposes a REST API, with interactive API documentation served at /docs, plus SSE-backed streaming endpoints for real-time workflow progress. Horizontal scaling is supported by running multiple backend replicas behind a load balancer, with execution state stored in PostgreSQL and Redis for cross-replica coordination.
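A client consuming the streaming endpoints needs to parse Server-Sent Events: `event:` sets the event type, `data:` lines accumulate and are joined with newlines, lines starting with `:` are comments, and a blank line dispatches the buffered event. This sketch follows those rules (ignoring `id` and `retry`); the `node_started` event name used in the test is hypothetical, not a documented Heym event.

```python
from typing import Iterable, Iterator


def parse_sse(lines: Iterable[str]) -> Iterator[dict]:
    """Yield {'event', 'data'} dicts from Server-Sent Events text lines."""
    event, data = "message", []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            if data:  # a blank line dispatches the buffered event
                yield {"event": event, "data": "\n".join(data)}
            event, data = "message", []
            continue
        if line.startswith(":"):
            continue  # comment line, often used as a keep-alive
        field, _, value = line.partition(":")
        if value.startswith(" "):
            value = value[1:]  # the spec strips a single leading space
        if field == "event":
            event = value
        elif field == "data":
            data.append(value)
        # "id" and "retry" fields are ignored in this sketch
```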

Teams and credential sharing allow organizations to collaborate on workflows securely. Credentials such as API keys, OAuth tokens, SMTP passwords, and database connection strings are stored encrypted in PostgreSQL and referenced by name in node configurations.

Team members with the appropriate role can use shared credentials without seeing the underlying secret values. Workflow ownership, edit permissions, and execution access are controlled per team, enabling safe delegation of automation development across large engineering organizations.